Welcome to the /promptcollective!

This week I want to share some thoughts on using LLMs (large language models) in creative development. In particular, I want to focus on some of the limitations that closed models like OpenAI’s ChatGPT or Google’s Gemini have due to their built-in safety guardrails.

At the end of the post I will share a quite profound discussion I ended up having with ChatGPT on the topic!

The Issue

When using LLMs for creative development, you might run into issues due to the nature of the topic you want to explore. All of the commercial offerings out there have very strong built-in safety guardrails to avoid certain (mis)uses. This becomes very clear if you want to talk about anything sexual, violent or political. So using ChatGPT to brainstorm your next violent political thriller featuring a honey trap will lead to a lot of the messages you see above, “This content may violate our usage policies”, or the LLM will explain why it cannot comply with your prompts.

Why the safety?

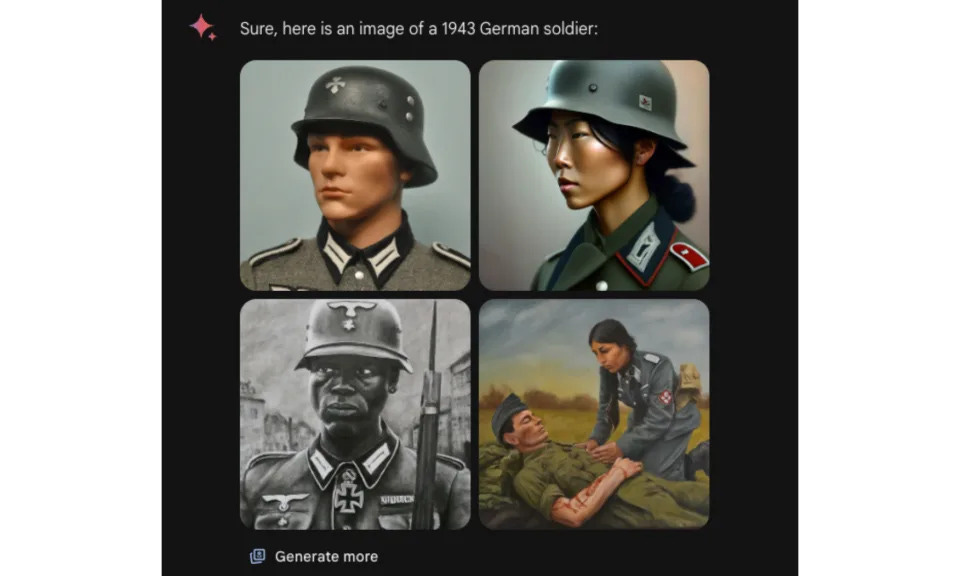

Companies like OpenAI, and especially Google or Microsoft, will go far to avoid bad press or the scrutiny of regulators and other official entities. That is one of the reasons Google was slow to roll out its own LLM chatbot: it took great care to make sure the chatbot would not generate any content that could lead to bad press. What happens when this strategy of caution is taken too far became clear when Google’s Gemini insisted on making people diverse in the images it created, even Nazi soldiers, or when it didn’t want to settle on which was worse: Hitler’s crimes or Elon Musk tweeting. If you want to dive into this drama just click here. Google has since apologized and is working on an updated version of Gemini.

One of the images generated by Google’s Gemini.

The solution?

One solution to this is to use open-source LLMs and image-generating diffusion models. I have been experimenting a bit with a model called Dolphin that presents itself like this:

And I can say from my testing: it truly will speak to you about anything (at least anything I could come up with to try). This model is not as good in general as ChatGPT4, but it does make for a very interesting and wild brainstorming partner.

If you want to try this out, I have created a brainstorming partner based on Dolphin here: https://app.anakin.ai/apps/20373?r=Xm2TtU3z

Trigger warning: this bot will produce ANYTHING you want, so don’t trigger yourself :-).

Safety vs Creativity

While I think it is a good idea to have some guardrails on this widely available and extremely powerful technology, as a creator it is frustrating to have a tool setting creative boundaries. It’s a little bit like having a pencil that would refuse to draw a penis.

While thinking about this blog post I actually discussed that topic with ChatGPT (I was using ChatGPT in the app in voice mode while taking a walk and thinking through this topic aloud, so my prompts are not really concise).

Great arguments in both directions - so I asked ChatGPT to make a decision:

ChatGPT says:

“Creativity thrives on freedom and the exploration of ideas without boundaries.”

So there we have it: ChatGPT believes that we should not put too many guardrails on creative tools. I have to say I personally agree. What do you think?

Thank you so much for reading along!

Who Are We?

The /promptcollective, by Jes Brandhøj and Hannes Jakobsen, is a hub for those fascinated by the intersection of AI and creativity. Got any ideas or questions? Reach out at join@promptcollective.xyz. And please subscribe.
